I shipped a one-line fix to a prompt last week. It made the AI better at one specific thing the customer had complained about. Twenty minutes later I found out it had quietly broken three other things that nobody had complained about — yet.

If you’ve spent any real time building with LLMs, you already know this story. If you haven’t, here’s the part that nobody tells you in the YouTube tutorials: AI software development is nothing like traditional software engineering, and the part that breaks first is your assumption that you can fix one thing at a time.

The Unit of Change Is the Whole System

In traditional software engineering, you have a function. The function has inputs and outputs. You write a test that pins the behavior. If the test passes, the function works. If you change the function, you re-run the test, and if it still passes, you ship. Other functions in the system don’t care, because they only see the contract — the inputs and outputs — not the internals. That’s also why I’ve argued before, in the context of startup engineering, that “perfect code” can be a luxury you can’t afford — but at least in classical code, you have the option.

Now look at an AI feature. The “function” is a prompt plus a model. The “inputs” are the user’s message plus whatever context you’ve stuffed in. The “output” is text the model generated — which means it depends on every token in the prompt, every example you included, every instruction you added, the temperature, the model version, and the phase of the moon. There is no contract. There is only behavior, and behavior is emergent.

When you change one line in that prompt — “always answer in JSON” becomes “always answer in JSON with a top-level result key” — you have not modified one function. You have edited the entire system. The model now reads every input differently. Every output may shift. Every downstream component that parses, evaluates, or routes on that output may now see something new.

This is the part that breaks people coming from a traditional software engineering background. It’s not that AI software development is harder — it’s that the unit of change has gotten bigger, silently, and your old testing instincts haven’t caught up.

Fixing One AI Bug Means Re-Testing Everything

So what does it actually mean to fix an AI bug?

In a normal codebase, if a user reports that a button is misaligned on Safari, you fix the CSS, run the visual regression suite, and you’re done. You don’t need to re-test login, checkout, search, and notifications. They live in their own corners of the system. Touching one corner doesn’t move the others.

In an AI system, every “corner” is wired into the same prompt and the same model. A customer reports the AI is being too pushy in upsells. You soften the system prompt. Now the AI is also softer when it should be firm — it stops collecting required information, hedges on critical answers, lets users wander out of the flow. You didn’t just fix the upsell behavior. You shifted the model’s overall personality, which shows up in every conversation it has, including the ones you weren’t trying to change.

This is why AI software testing cannot be a checklist of unit tests you ran once and forgot about. Every prompt change is, in effect, a global change. Every bug fix is a release. Every release needs full regression coverage. The mindset has to shift from “did I break this one thing?” to “did I break anything?“

You Need AI to Test AI

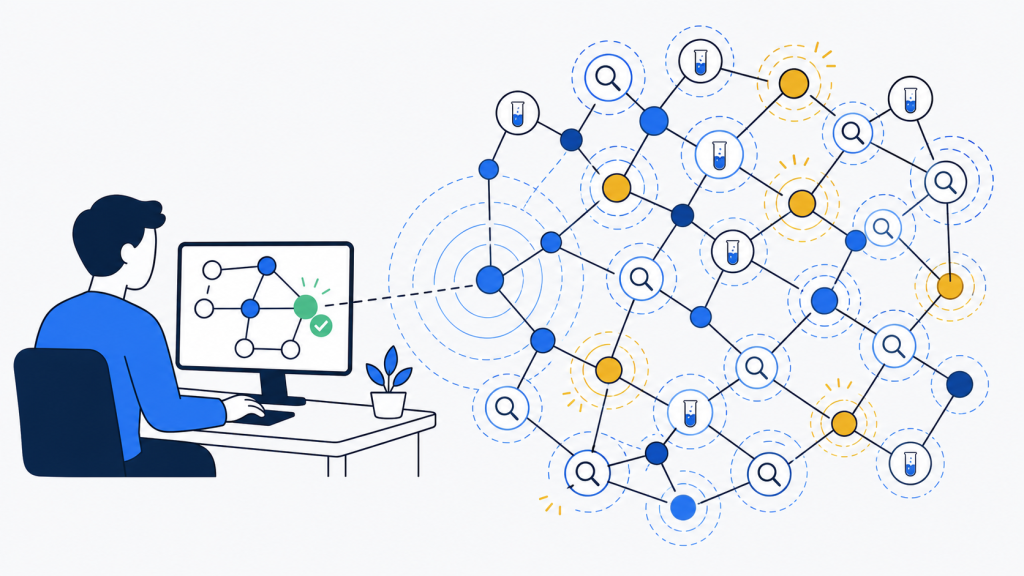

This is where it gets weird, and where AI software development really departs from traditional engineering. The only practical way to run a full regression on an AI system is to use another AI to do it.

You build a test harness that does what a human QA team would do, but at machine speed. It plays the role of dozens or hundreds of different users — the angry customer, the confused first-timer, the user who tries to jailbreak the system, the user who answers in incomplete sentences, the user who speaks a different language than your default. It runs each scenario against your production prompt. It captures the outputs. Then a judge model — usually a stronger, slower model than the one in production — grades each output on the criteria that matter: Did the AI complete the task? Did it stay on brand? Did it leak data it shouldn’t have? Did it follow the format?

This is what people now call LLM evals. Some people call it AI software testing. Some call it LLM observability when they’re focused on the production side rather than the pre-release side. The terminology is still settling, but the underlying motion is the same: you cannot manually verify the behavior of a non-deterministic system at the scale and frequency that AI software development requires, so you build AI systems to verify your AI systems.

This is not optional. You cannot ship AI features at a real rate of iteration without it. At Viva, where the entire product surface is an AI that has to act safely on behalf of a medical or dental practice, this isn’t a nice-to-have — every prompt change is graded by an automated suite before it ever touches a real call. The teams that try to do it manually end up either shipping too rarely (because every release is a four-day QA marathon) or shipping too recklessly (because they only catch regressions when customers complain). Neither is acceptable in a serious product.

Prompt Engineering Is Half the Job — Eval Engineering Is the Other Half

The whole conversation about prompt engineering missed something. Writing the prompt is the easy part. Anyone with a thesaurus and ten minutes can write a passable prompt. The hard part — the part that separates a working AI product from a demo — is the eval suite that proves the prompt does what you think it does, today, and will catch you when it stops doing that tomorrow.

A good AI engineering team spends as much time on its evals as on its prompts. The evals are the assets. The prompts are throwaway — you’ll rewrite them ten times before the product is mature. The evals are what survive across rewrites, what let you compare model versions when a new one comes out, what give you the confidence to actually ship. And unlike prompts — which are increasingly easy to write because the models help you write them — evals require domain judgment, including the kind of judgment about edge cases and adversarial inputs that I touched on in the post about data poisoning and model manipulation.

I will say this plainly because it took me a while to internalize it: if you’re building AI software and you don’t have an eval suite, you don’t have a product. You have a prototype that occasionally works.

What This Means for How You Build AI Software

A few practical shifts I’d recommend to anyone moving from traditional software engineering into AI software development:

- Stop thinking of prompts as code. Prompts are configuration that changes the behavior of an entire dependency. Treat them with the same care you’d treat a database migration, not a CSS tweak.

- Build the eval harness before you scale the feature. The first version can be ten scenarios graded by a judge model and a spreadsheet. It doesn’t matter — what matters is that you have something to run before you ship.

- Track scores over time. Every prompt change, every model upgrade, every new context window — run the suite, log the score, look at where it went up and down. The shape of those numbers is your real release notes.

- Use a stronger model as the judge. If your production model is Sonnet, judge with Opus. If you’re on a smaller model for cost, judge with a frontier model. The judge is allowed to be slow and expensive because you don’t run it on every user request, only on your test set.

- Accept that AI software testing is part of the runtime. Production monitoring of LLM outputs — LLM observability — is not separate from your eval suite. It’s the same instinct, applied to live traffic.

The companies winning at AI software development right now aren’t the ones with the cleverest prompts. They’re the ones who internalized early that traditional software engineering doesn’t work for this, built the testing discipline to match, and stopped pretending that “the AI just got better” is an explanation for anything.

One-line fixes still exist in this world. But the test you run afterward is no longer one line. It’s the whole system, every time. That is the cost of building with a non-deterministic component at the center of your product, and the sooner you stop fighting it, the sooner you actually ship.